AI is scaling fast.

But as systems become more capable, one challenge becomes increasingly clear:

Efficiency.

Not just in compute — but across the entire data path.

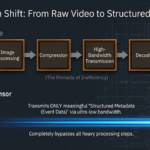

In today’s architectures, massive amounts of raw data are continuously generated, transmitted, and processed across multiple layers — from sensors to memory to processors.

Moving that data consumes significant power, introduces latency, and adds system complexity.

As AI expands into real-world environments — from wearables to robotics, automotive, and edge devices — this model becomes harder to sustain.

A natural evolution is emerging:

Bringing computation closer to where data originates.

At EyeChip, we believe the most effective place to start is the sensing layer.

Instead of transmitting continuous streams of raw visual data, our approach processes eye signals directly on-chip and outputs structured, meaningful information in real time.

This reduces unnecessary data flow, lowers power consumption, and enables more scalable AI systems at the edge.
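To make the data-flow argument concrete, here is a rough back-of-the-envelope sketch comparing a raw image stream with a structured output stream. Every number in it (resolution, frame rate, bytes per sample) is an illustrative assumption chosen for the arithmetic, not an EyeChip specification, and the function names are hypothetical.

```python
# Back-of-the-envelope comparison: raw image stream vs. structured output.
# All figures are illustrative assumptions, not EyeChip specifications.


def raw_stream_bytes_per_s(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Data rate of an uncompressed image stream leaving the sensor."""
    return width * height * bytes_per_pixel * fps


def structured_bytes_per_s(bytes_per_sample: int, samples_per_s: int) -> int:
    """Data rate when the sensor emits only a compact record per sample."""
    return bytes_per_sample * samples_per_s


# Assumed raw stream: 320x240 monochrome, 1 byte per pixel, 120 frames/s.
raw = raw_stream_bytes_per_s(width=320, height=240, bytes_per_pixel=1, fps=120)

# Assumed structured stream: one 16-byte record per frame at the same rate.
structured = structured_bytes_per_s(bytes_per_sample=16, samples_per_s=120)

reduction = raw / structured

print(f"raw stream:        {raw:,} bytes/s")
print(f"structured stream: {structured:,} bytes/s")
print(f"reduction factor:  {reduction:,.0f}x")
```

Under these assumed figures the link off the sensor carries thousands of times less data. The real ratio depends entirely on the actual sensor format and output record, which this post does not specify.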

This is not just an incremental improvement.

It is a shift toward more efficient AI system design — where intelligence begins at the point of data creation.

As the industry continues to evolve, the focus on efficiency will only deepen.

And the architectures that succeed will be those that rethink not just how we compute — but where computation begins.

#EdgeAI #Semiconductor #AIInfrastructure #XR #HumanMachineInteraction #LowPowerAI #NextGenAI